Two Papers in CVPR 2022

Video Computing Group members have two papers in CVPR 2022. One, on designing dynamic multi-task architectures, is an oral presentation (collaboration with NEC Labs); the other is on context-aware adversarial attacks (collaboration with PARC).

Multi-task learning commonly encounters competition for resources among tasks, especially when model capacity is limited. This challenge motivates models that allow control over the relative importance of tasks and the total compute cost at inference time. In this work, we propose such a controllable multi-task network that dynamically adjusts its architecture and weights to match the desired task preference as well as the resource constraints. In contrast to existing dynamic multi-task approaches, which adjust only the weights within a fixed architecture, our approach affords the flexibility to dynamically control the total computational cost and to better match the user-preferred task importance.

Title: Controllable Dynamic Multi-Task Architectures

D. S. Raychaudhuri, Y. Suh, S. Schulter, X. Yu, M. Faraki, A. K. Roy-Chowdhury, M. Chandraker, IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), 2022


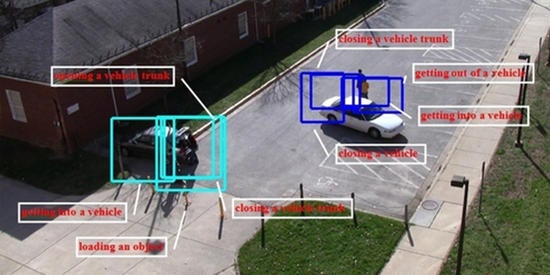

In this work, we developed an optimization strategy for crafting adversarial attacks in which the objects on the victim side are contextually consistent, so that the attack is hard to detect with context-aware classifiers or detectors. The attacks are crafted without querying the victim model at all, hence the term zero-query. Querying the victim model, even just a few times, exposes the attacker to detection, so query-free strategies are necessary.

Title: Zero-Query Transfer Attacks on Context-Aware Object Detectors

Z. Cai, S. Rane, A. Brito, C. Song, S. Krishnamurthy, A. Roy-Chowdhury, M. S. Asif, IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), 2022